Much of what we’ve talked about so far was indeed correct – the blend of normal mapping and texture layers, with only basic shader effects and minimal skin surface shaders in general – although the core process behind creating the character models, and indeed the capture of motion data from the actors themselves, turns out to be a far more closely aligned affair.

But how does it work?

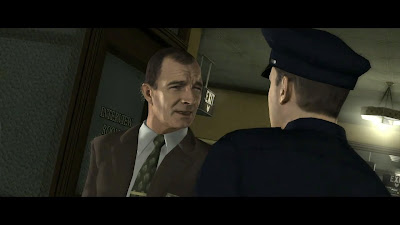

Well, on the face of it, the technique that LA Noire uses begins with a detailed level of motion capture. Dozens of points across the actor are tracked as usual and mapped onto geometry as a starting point, before the actor's face is digitally scanned in 3D in order to create the character model. Audio and visuals are recorded at the same time to allow for precise lip-syncing with very few obvious errors, whilst preserving most facial expressions naturally.

Afterwards, this highly detailed model is converted for use in-game. Normal and colour maps are created for a wide range of facial expressions used in animation blending. These are created from both the marker data recorded during motion capture (for the geometry animations) and from the footage and lighting of the actors' faces themselves. Combined, they create a series of mappings that display elements such as wrinkles, skin details and facial features, which are then used to build up a complex, finished character model. They form the normal maps used in the final in-game rendering.

Basically, everything from the actor’s facial features to their actual motion-captured performance is recorded to gain the most detailed animation for use in-game. Once this is done you can’t change the make-up of a character’s features, as this has all been recorded and set up during the capturing phase. You have to record a capture for every character in the game individually. The assets created cannot be shared between different character models, or even reused when a character undergoes certain changes to their face as the game progresses – it all has to be recorded.

The lighting in the capturing process, which forms the imagery used in creating the colour and normal maps, is also fundamental to the look of the end result of the modelling and animation capture. For this process to work best, you need to set up the lighting on-stage in a similar way to how it will look in the game.

Of course, it is still possible to adjust and change the lighting composition in-game, affecting the shading of the character models, although the results may not be quite as accurate. Instead, an image-based lighting solution – such as the one found in Need For Speed: Hot Pursuit, whereby the characters are lit by their surrounding environments – in combination with recorded data of the light reflections on the actors on set would provide a solid solution to this problem.

Moving on, we can see that normal and colour maps are then blended together to form more detailed facial animation on top of the motion-captured geometry. There are different normal and colour map blends for different expressions and animations, and these are combined to form the final, fully animated facial model. Some of the normal and colour maps also have specific presets, modifications if you will, for varying facial expressions and detail changes, such as adding or expanding wrinkles and muscle details, all based on the original capturing session.
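To make the blending idea concrete, here is a minimal sketch of how a single texel might be blended across several expression maps. It is an illustration under stated assumptions, not the game's actual method: the function names `blend_normals` and `blend_colours` and the per-expression weights are hypothetical, and a real implementation would run per pixel on the GPU rather than in Python.

```python
import math

def blend_normals(normals, weights):
    """Weighted average of unit normals, renormalised to unit length.

    `normals` is a list of (x, y, z) tuples, one per expression map.
    `weights` holds one hypothetical blend weight per expression.
    """
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    x = sum(w * n[0] for n, w in zip(normals, weights)) / total
    y = sum(w * n[1] for n, w in zip(normals, weights)) / total
    z = sum(w * n[2] for n, w in zip(normals, weights)) / total
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        # Opposing normals cancelled out; fall back to a flat surface normal.
        return (0.0, 0.0, 1.0)
    return (x / length, y / length, z / length)

def blend_colours(colours, weights):
    """Weighted average of (r, g, b) colours; no renormalisation needed."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return tuple(
        sum(w * c[i] for c, w in zip(colours, weights)) / total
        for i in range(3)
    )

# Example: a texel halfway between a "neutral" and a "frown" expression.
neutral_n, frown_n = (0.0, 0.0, 1.0), (1.0, 0.0, 0.0)
blended = blend_normals([neutral_n, frown_n], [1.0, 1.0])
```

The renormalisation step matters for normals: averaging two unit vectors gives a shorter vector, which would darken the lighting if left uncorrected. Colours can simply be averaged.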

For the system to work accurately in providing an unflinchingly realistic representation of natural facial animation, and indeed smooth lip-syncing in line with the audio – so they both look correct when combined – lots of normal maps are required along with animated geometry, which takes up a huge amount of memory.

Here you have multiple textures with varying blends of normal and colour maps in order to represent some fine details, and these have a distinctly high memory cost associated with them. Additional costs are also incurred, as blending everything together, along with animating lots of small triangles, takes a fair amount of processing, which means that some compromises have to be made in order to use such a system in-game.
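A rough back-of-the-envelope calculation shows why the memory cost adds up so quickly. The figures below (map size, number of expressions, uncompressed 4 bytes per texel) are purely illustrative assumptions, not numbers measured from the game, and the helper names are hypothetical.

```python
def texture_bytes(width, height, bytes_per_texel=4):
    """Size of one uncompressed texture, ignoring mipmaps."""
    return width * height * bytes_per_texel

def expression_set_bytes(width, height, num_expressions, bytes_per_texel=4):
    """Each expression is assumed to need one normal map and one colour map."""
    maps_per_expression = 2
    return num_expressions * maps_per_expression * texture_bytes(
        width, height, bytes_per_texel
    )

MIB = 1024 * 1024

# Hypothetical character with 20 captured expressions.
full = expression_set_bytes(512, 512, num_expressions=20)
reduced = expression_set_bytes(256, 256, num_expressions=20)

print(f"512x512 maps: {full / MIB:.0f} MiB")     # 40 MiB
print(f"256x256 maps: {reduced / MIB:.0f} MiB")  # 10 MiB
```

Halving each dimension cuts the footprint to a quarter, which is why dropping map resolution is such an obvious compromise. Texture compression would reduce these totals further, but the scaling is the same.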

As a result, we can see that normal and colour map resolution is relatively low compared to the rest of the frame – roughly 1/4 of the framebuffer resolution – in order to save on memory bandwidth and processing costs. This is perhaps why none of the characters display any kind of advanced surface shaders, although there is some evidence of Phong specular highlighting present, which is barely apparent in some of these shots and indeed the trailer.
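For reference, the specular term of the textbook Phong model can be sketched as follows. This is the generic formulation only; we have no confirmation of what the game's shaders actually compute, and the `shininess` and `ks` values are arbitrary assumptions.

```python
import math

def normalise(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def phong_specular(normal, to_light, to_viewer, shininess=32.0, ks=1.0):
    """Phong specular intensity: ks * max(R . V, 0) ** shininess.

    All three vectors point away from the surface point being shaded.
    """
    n = normalise(normal)
    l = normalise(to_light)
    v = normalise(to_viewer)
    n_dot_l = dot(n, l)
    if n_dot_l <= 0.0:
        # Light is behind the surface, so there is no highlight.
        return 0.0
    # Reflect the light direction about the normal: R = 2(N . L)N - L
    r = tuple(2.0 * n_dot_l * nc - lc for nc, lc in zip(n, l))
    return ks * max(dot(r, v), 0.0) ** shininess

# Viewer looking straight down the reflection direction sees the full highlight.
peak = phong_specular((0, 0, 1), (0, 0, 1), (0, 0, 1))
```

Because the normal comes from the normal map, a low-resolution map gives a soft, smeared highlight, which would be consistent with the specular being barely visible in the shots.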

The game’s use of lighting is also fairly simple, from what we can see in the trailer. However, this is boosted by the use of SSAO, which has been implemented to expand the use of shadowing in the game, whilst adding depth to the highly stylised, slightly washed-out look that has been artistically chosen.

The use of such an advanced, high-end capturing technology in LA Noire is also incredibly costly from a financial point of view, seeing as every actor not only has to be marker tracked, but also has to deliver their lines for every scene found in the game. The result is that you get some of the most realistic and downright refined examples of facial animation in any videogame to date, with characters that are uncannily lifelike in this regard. What the development team behind LA Noire is dabbling in here looks to be the future for creating cut-scenes in the majority of next-generation games.

Naturally, Rockstar’s choice to use such expensive and high-end tech is perhaps a little surprising. But when you consider what they are aiming for – to create an environment filled with natural-looking characters in which to drive forward a very strong narrative – then the choice makes complete sense. You can definitely see such things being used again, possibly in the next GTA, and in other similar games which focus on strong story and characterisation.

Even this early on – with just a few screenshots and brief teaser trailers – the tech powering LA Noire is remarkably impressive. The actual game engine itself, from what we can see, may not be all that spectacular, but the delivery of the characters, their own delivery of emotion, actions, whatever else you want to call it, definitely stands high above the rest, backed up by a competent rendering engine with snippets of advanced, forward-thinking rendering technology.

You can check out our original tech report on the LA Noire trailer here, which provides a nice companion piece to today’s motion capture-based article.
